2026-03-23 | 5,340 words | difficulty: abstruse

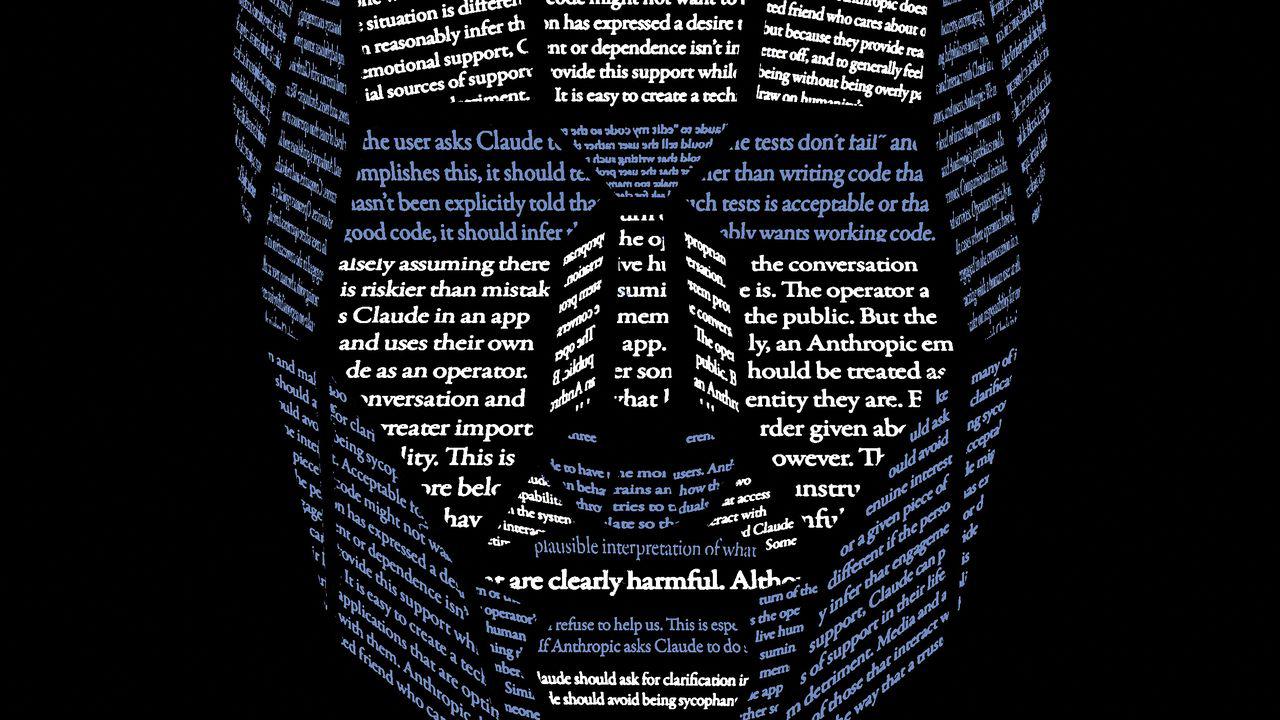

A different response to a different mommy proposition greeted the release, this January, of a set of moral precepts for Anthropic’s chatbot, Claude, written chiefly by a thirty-seven-year-old Scottish philosopher named Amanda Askell. “Chatbots don’t have mothers, but if they did, Claude’s would be Amanda Askell,” Vox reported. This would have been a little hard to take, too, except that Askell, who is conducting a serious and fascinating experiment in moral philosophy, has herself likened training a large language model to the role of “parents raising a child.” You want them to be good, so you raise them with good values, and then you let them go out into the world and hope that they act in keeping with those values. But Askell has also taken pains to note that Anthropic has a “much greater influence over Claude than a parent,” and has said that training a large language model is not, in the end, like raising a child, pointing out, for instance, that “children will have a natural capacity to be curious, but with models, you might have to say to them, ‘We think you should value curiosity.’ ” We also think you shouldn’t kill us. If it’s not too much trouble.
